Bottom Line: Nextdoor promises a digital town square but often delivers a digital battleground. Its utility for practical neighborhood logistics is undeniable, yet it remains perpetually hamstrung by inconsistent moderation and a user experience that hinges entirely on the whims of your actual neighbors.

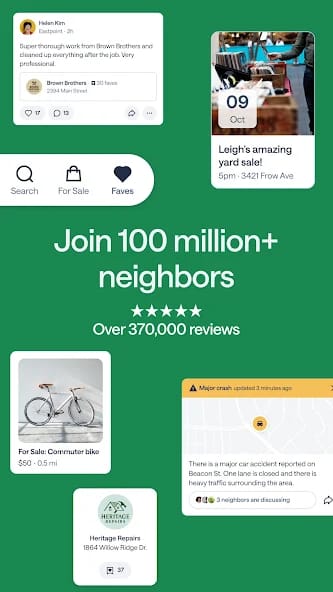

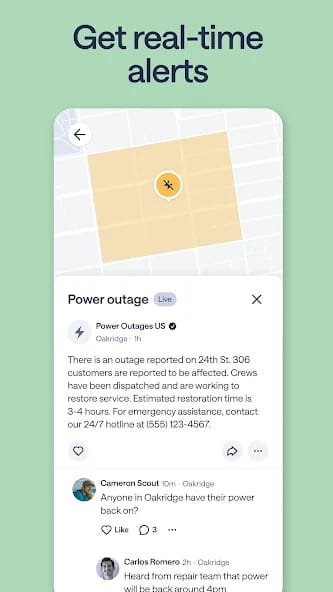

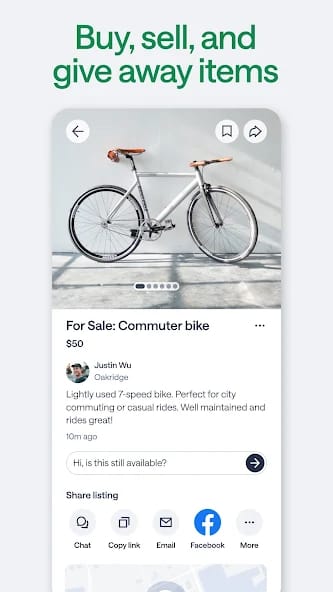

Nextdoor doesn't have a user experience problem so much as it has a human nature problem, one that its platform design seems to amplify as often as it eases. The core loop is simple: log in, scroll the feed, and engage with posts about lost dogs, package thieves, or pleas for a good electrician. When it works, it feels like a civic superpower. Finding a last-minute babysitter or getting a dozen eyewitness accounts of a local power outage provides a sense of tangible, networked community that larger platforms can't replicate. The utility is sharp, focused, and immediate. The recommendations feature, in particular, remains a powerful tool for navigating the often-opaque world of local services.

However, the platform's structural integrity is brittle, resting entirely on the social cohesion of the neighborhood it serves. Nextdoor's greatest strength, its locality, is also its most profound weakness. A neighborhood prone to suspicion and conflict will produce a Nextdoor feed to match. The infamous "suspicious person" post, often tinged with racial bias, became a stereotype for a reason. The company has made efforts to curb this through features that force users to slow down and consider the implications of their posts, but the fundamental architecture still lends itself to a kind of digital curtain-twitching that can feel more divisive than cohesive.

Moderation is the platform's Achilles' heel. While Nextdoor sets the terms of service, enforcement is largely delegated to volunteer local moderators. The result, as reflected in a sea of user complaints, is a system that can feel arbitrary and opaque. Feuds spill over from backyard fences to the digital feed, with moderation disputes becoming a meta-narrative that distracts from the platform's purpose. Being suspended from Nextdoor for a poorly interpreted comment feels less like a corporate action and more like being shunned by a homeowners' association you never asked to join. This reliance on volunteer labor for a task as sensitive as content moderation is a critical design flaw for a platform of this scale. It creates an environment where governance, not utility, becomes the dominant user experience for those who run afoul of the rules, or the rule-makers.

The recent push into AI-driven content sorting and local news partnerships feels like a necessary, if late, course correction. The goal is to elevate the signal and reduce the noise—to make the feed more about the new restaurant opening and less about disputes over parking spaces. But it's an open question whether an algorithmic fix can solve a deeply human community problem.